The Hidden Accounting Risks Shaping the AI Economy

Hello everyone,

Today, I want to preview the three largest areas of technical accounting risk in AI, which I cover in detail in my upcoming white paper. These risks are already influencing revenue forecasts, product strategy, and deal structuring. Before I get to them, a few important updates and shout-outs for the year ahead.

First, my fall semester at SJSU is wrapping up, and I have several exceptionally hard-working and talented students in finance, accounting, and business who are seeking internships. If you have opportunities or would be open to mentoring, please reach out and I will gladly connect you.

Next, I want to highlight three student-run businesses that deserve your support. We are only as strong as the community we build, and these students are creating real value through their work.

Pascal Beck - BaySide Nursery - Link here

Elyas Rasti - Moe’s Mobile Break Repair - Link here

While I love seeing these businesses launch, I spend the rest of my time analyzing businesses at the opposite end of the spectrum: the massive scale of the AI economy.

My capacity for next year is nearly full. Alongside publishing my book, I will continue my advisory partnerships with PCG and TABot, lead a Miles Masterclass, lecture at SJSU through 2026, and continue my partnership with the legendary Angela at GAAPSavvy as we work with Klarity to complete certification in their financial-transformation AI tools.

If you would like me to speak at your company about AI adoption, set up a retainer for technical accounting and revenue architecture, or support leadership development within your finance organization, please reach out soon to reserve time. A quick message is enough to get things moving. When I have the bandwidth, I am always happy to support however I can. Otherwise, thank you for continuing to follow this newsletter, share feedback, and offer the encouragement I hear from so many of you in person.

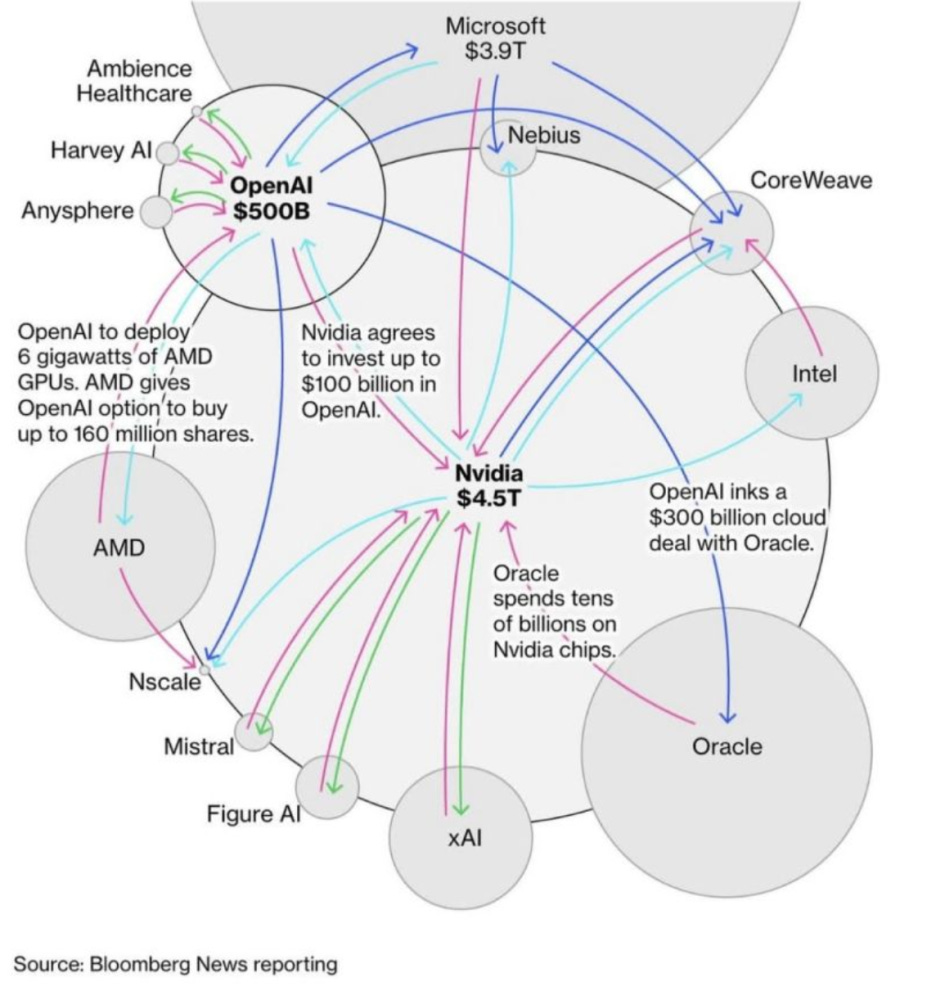

Now, here is a brief look at the top technical accounting risks emerging in AI from a finance and forecasting perspective. Specifically, I will be covering what I view as the largest risks in the chart below.

If you find this helpful for your own internal planning, please comment “white paper” below, along with any areas you’d like me to explore, so I can cover them all in my upcoming white paper.

Risk #1: The Collectability Fragility Underpinning AI Revenue

Under revenue recognition guidance, a company may recognize revenue, and report Remaining Performance Obligations (RPO, often referred to as backlog), only when it is probable that it will collect substantially all of the consideration it is entitled to. In ASC 606 (the technical accounting literature used to assess revenue contracts), “probable” is commonly interpreted as roughly a 75 percent likelihood, and “substantially all” as at least 90 percent of committed consideration. More importantly, a contract does not exist for accounting purposes under ASC 606 unless collectability is probable at inception. If this threshold is not met, revenue recognition is deferred until sufficient evidence of collectability emerges.
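To make the threshold concrete, here is a minimal Python sketch of the rule of thumb above (roughly a 75 percent likelihood of collecting at least 90 percent of committed consideration). The `Scenario` class, the `collectability_is_probable` function, and the scenario probabilities are all hypothetical and for illustration only; ASC 606 does not prescribe a formula, and a real assessment rests on judgment and evidence.

```python
from dataclasses import dataclass


@dataclass
class Scenario:
    probability: float         # estimated likelihood of this outcome (assumption)
    fraction_collected: float  # share of committed consideration collected


def collectability_is_probable(scenarios, min_fraction=0.90, min_probability=0.75):
    """Return (passes, p), where p is the estimated probability of collecting
    at least `min_fraction` of the committed consideration."""
    total = sum(s.probability for s in scenarios)
    if abs(total - 1.0) > 1e-9:
        raise ValueError("scenario probabilities must sum to 1")
    p = sum(s.probability for s in scenarios if s.fraction_collected >= min_fraction)
    return p >= min_probability, p


# Hypothetical outlook for an AI lab with limited operating history.
outlook = [
    Scenario(probability=0.60, fraction_collected=1.00),  # raises capital, pays in full
    Scenario(probability=0.10, fraction_collected=0.92),  # renegotiates modestly
    Scenario(probability=0.30, fraction_collected=0.20),  # fails to raise, pays little
]
passes, p = collectability_is_probable(outlook)
print(f"P(collect >= 90%) = {p:.0%}, threshold met: {passes}")
```

In this made-up outlook the threshold is not met, even though full payment is the single most likely outcome. The answer is only as good as the scenario probabilities, which is exactly the input that is hardest to support for these customers.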

Now imagine a hyperscaler entering into a ten-year, one-billion-dollar agreement with an AI company. On day one, the hyperscaler must determine whether it is probable that the customer will ultimately pay. How is that conclusion formed?

Traditionally, the starting point is historical performance across smaller, recurring contracts. Credit evaluations rely on past spend, credit scores, and broader indicators of financial health. The challenge today is that many AI companies entering into these mega commitments have almost no operating history to analyze, and certainly none at the scale of these commitments.

The second option is reviewing financial statement information: burn rate, solvency, liquidity, and the customer’s ability to raise future capital. But most of the AI companies signing these contracts are private, unaudited, and opaque. The data simply does not exist at the level needed to support a robust collectability assessment.

As a result, companies are left to infer. They rely on public announcements, fundraising rounds, partial or unaudited financials, and management representations. These inferences become the backbone of a judgment that must support five-year, ten-year, or even longer-term commitments. The forward-looking uncertainty is enormous.

In practice, major hyperscalers and chip manufacturers appear to be treating these commitments as fully collectible, placing them into RPO or backlog and recognizing revenue when usage occurs. If the consideration is nonrefundable, the revenue mechanics may technically hold. The broader issue is that a significant portion of reported growth, and therefore market valuation, is now tied to the longevity of AI labs with limited operating history and extreme burn rates.

Consider an AI lab currently spending two billion dollars annually on a hyperscaler. If that company fails to raise capital, its spend can collapse from two billion to zero overnight. Remaining Performance Obligations would need to be reduced, future revenue and cash flow forecasts would need to be revised, and the hyperscaler’s growth trajectory could shift materially. These risks are not meaningfully reflected in current models.
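Here is a rough Python sketch of that revision. Only the two-billion-dollar annual spend comes from the example above; the hyperscaler's total RPO, the lab's remaining commitment, and the revenue figures are hypothetical numbers I chose for illustration, and `revise_for_customer_failure` is an illustrative helper, not a standard model.

```python
def revise_for_customer_failure(total_rpo, customer_rpo, forecast_revenue,
                                customer_annual_spend, prior_year_revenue):
    """Remove one failed customer's commitments from RPO and next-year revenue.

    All amounts are in billions of dollars.
    """
    revised_revenue = forecast_revenue - customer_annual_spend
    return {
        "revised_rpo": total_rpo - customer_rpo,
        "revised_revenue": revised_revenue,
        "growth_before": forecast_revenue / prior_year_revenue - 1,
        "growth_after": revised_revenue / prior_year_revenue - 1,
    }


# Hypothetical hyperscaler segment; only the 2.0 annual spend is from the text.
result = revise_for_customer_failure(
    total_rpo=100.0,            # assumed reported backlog
    customer_rpo=16.0,          # assumed remaining commitment from the AI lab
    forecast_revenue=25.0,      # assumed next-year revenue forecast
    customer_annual_spend=2.0,  # the lab's annual spend
    prior_year_revenue=20.0,    # assumed prior-year revenue
)
for name, value in result.items():
    print(f"{name}: {value:.2f}")
```

Under these assumptions, a single customer failure takes forecast growth from 25 percent to roughly 15 percent. The sketch ignores second-order effects such as stranded capacity or resale of the freed-up compute.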

If some AI labs are announcing over one trillion dollars in cumulative commitments, the natural questions become: How many will survive long enough to honor these contracts? How many will fail or be absorbed through consolidation? And what happens to cash flows and valuations if even a portion of these commitments evaporates?

In my view, the risk is substantial unless one of three outcomes occurs: a government backstop, strategic acquisitions that absorb the obligations, or public-market fundraising that shifts the risk to investors. Each path carries ethical, financial, and practical concerns, and none fully eliminates the underlying exposure.

This is one of the most significant accounting and forecasting risks emerging in the AI economy, and finance leaders should begin treating it as such.

Risk #2: Reciprocal Commitments

The next issue emerging in these arrangements, aside from the sheer scale of the commitments, is the reciprocal nature of the relationships. Under ASC 606, this is addressed through the guidance on “payments to a customer.” Historically these arrangements were known as supplier purchases or balance-of-trade initiatives. They were always present in the SaaS and software world, but never at the scale or visibility we are seeing today.

For illustration, imagine I am a hyperscaler. An AI lab enters into a ten-year, one-billion-dollar commitment for compute. In return, I enter into my own ten-year, one-billion-dollar commitment to use that AI lab’s models.

This is not the same as two parties swapping cash to artificially inflate revenue. It’s not me giving my friend $100 today, them giving it back to me tomorrow, and us both saying we have $100 in sales. Real products and services are exchanged, and both may have legitimate commercial value. The key questions under ASC 606 are whether the products are actually being used and whether the commitments are priced at fair market value.

The guidance requires a company to evaluate any payment to a customer as potential contra-revenue. If neither party actually uses the goods or services and the payments simply circulate, there is no commercial substance and neither party should recognize revenue or RPO at all.

If the services are used but one party pays materially above fair value, the excess must be treated as a reduction of revenue. For example, if I pay one billion dollars for services that are worth one hundred million, nine hundred million would be treated as contra-revenue and removed from RPO.
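The arithmetic can be sketched in a few lines of Python. The function name `split_payment_to_customer` and its `distinct_benefit` flag are my own illustrative choices; the flag reflects the case described earlier, where nothing of substance is received and the entire payment is treated as a reduction of revenue.

```python
def split_payment_to_customer(payment, fair_value_received, distinct_benefit=True):
    """Split a payment to a customer into (purchase_cost, contra_revenue).

    If no distinct good or service is received, the whole payment reduces
    revenue. Otherwise only the excess over fair value does.
    """
    if payment < 0 or fair_value_received < 0:
        raise ValueError("amounts must be non-negative")
    if not distinct_benefit:
        return 0, payment
    contra_revenue = max(payment - fair_value_received, 0)
    return payment - contra_revenue, contra_revenue


# The example from the text: pay $1 billion for services worth $100 million.
purchase_cost, contra_revenue = split_payment_to_customer(1_000_000_000, 100_000_000)
print(f"purchase cost:  ${purchase_cost:,}")
print(f"contra-revenue: ${contra_revenue:,}")
```

The calculation is trivial once `fair_value_received` is known. The hard part, as discussed next, is supporting that number.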

In theory this is straightforward. In practice, it presents enormous challenges. From an accounting and auditing perspective, how do we determine whether the commitments are being used, or what the fair market value of the usage truly is? With rapid changes in chips, architectures, model capabilities, and competitive dynamics, the value of a ten-year commitment can shift dramatically. Companies themselves acknowledge that today’s pricing and use cases may be obsolete within a few years.

Another challenge is verifying actual productive use. A company may “burn” compute capacity simply to satisfy a commitment. A hyperscaler may technically deploy an AI model without using it anywhere near its full potential, analogous to buying software and leaving it on the shelf. How would an auditor substantiate true utilization at the level required to validate revenue recognition? The complexity of these transactions is extremely high, while the available accounting evidence and resources are extremely thin.

These reciprocal commitments also create distorted incentives. Both parties benefit from reporting larger sales, larger RPO balances, and accelerated growth trajectories. This dynamic makes over-commitment and over-consumption more likely, even when the net economic benefit is marginal.

The underlying products and use cases are real, so the risk here is smaller than the collectability issue. But it is still significant and increasingly relevant as deal structures grow larger, longer, and more intertwined.

Risk #3: Cross-Investments, VIE Pressure, and the Shadow of Consolidation

Another emerging issue is the rapid increase in cross-investments among AI companies, hyperscalers, chip manufacturers, and model providers. Many of these equity positions are intentionally structured to remain below thresholds that would trigger significant influence or consolidation. Under U.S. GAAP, an investor holding less than twenty percent of the voting interests in another entity is generally presumed not to have significant influence unless qualitative factors indicate otherwise. When significant influence is absent, and when the entity is not consolidated under ASC 810 (Consolidation, which covers both the variable interest entity and voting interest entity models), the investment is typically accounted for under ASC 321 as an equity security. These investments are generally carried at fair value, with changes recognized in earnings each reporting period. For securities without a readily determinable fair value, a company may instead elect the measurement alternative, which carries the investment at cost, adjusted for impairment and for observable price changes such as new funding rounds.

This raises two important questions: how valuation is determined and whether significant influence exists in substance, even when not in form.

Valuation. Many of these AI investments lack observable cash flows or reliable discounted cash flow inputs. As a result, companies rely heavily on valuations from other investors, recent funding rounds, and comparable transactions, many of which involve the same small circle of strategic investors. Fair value becomes a function of market sentiment rather than economic fundamentals. When sentiment shifts, these valuations will move directly through earnings under ASC 321, creating income-statement volatility and potential impairment waves.
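A short Python sketch shows how directly a sentiment shift reaches the income statement. The ownership percentage and both valuations are hypothetical, `fair_value_change` is an illustrative helper, and the calculation deliberately ignores dilution, liquidation preferences, and other terms that matter in practice.

```python
def fair_value_change(ownership, prior_valuation, new_valuation):
    """Unrealized gain (+) or loss (-) on an equity stake, ignoring dilution."""
    if not 0 <= ownership <= 1:
        raise ValueError("ownership must be a fraction between 0 and 1")
    return ownership * (new_valuation - prior_valuation)


# Hypothetical: a 10% stake in an AI lab whose implied valuation falls
# from $50 billion to $30 billion between reporting periods.
change = fair_value_change(ownership=0.10, prior_valuation=50e9, new_valuation=30e9)
print(f"impact on earnings: {change / 1e9:+.1f} billion dollars")
```

Nothing about the investee's operations needs to change for this loss to appear. A lower-priced funding round led by someone else is enough.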

Significant Influence. A second, more structural concern is how close some companies are operating to the boundaries of consolidation. Several arrangements appear designed to avoid triggering the VIE guidance, despite providing capital, strategic influence, and reciprocal commercial commitments that resemble substantive involvement. Consider the implications if a hyperscaler were required to consolidate an AI lab: the burn rates, long-term obligations, and multibillion-dollar compute commitments would dramatically alter the hyperscaler’s financial statements, margins, and valuations. Companies are highly motivated to avoid this outcome, yet continue to deepen economic exposure through investments and commercial agreements.

The combination of undervalued risks, equity positions measured through sentiment-driven fair values, strategic involvement approaching significant influence, reciprocal purchases, and potential backstop arrangements suggests that consolidation risk is meaningfully higher than most auditors or investors currently appreciate. Investors should rigorously model “what if” consolidation scenarios and assess how they would affect earnings, cash flows, and enterprise value.
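As a starting point for that kind of “what if” analysis, here is a deliberately simplified Python sketch. All figures are hypothetical and in billions of dollars, and `pro_forma_consolidation` is an illustrative helper. It combines the two income statements and eliminates the lab's compute purchases from the hyperscaler, while ignoring noncontrolling interests, purchase accounting, and debt.

```python
def pro_forma_consolidation(parent, investee, intercompany_revenue):
    """Combine two income statements and eliminate intercompany sales.

    Eliminating the intercompany revenue and the matching expense lowers
    combined revenue but leaves combined operating income unchanged.
    """
    revenue = parent["revenue"] + investee["revenue"] - intercompany_revenue
    operating_income = parent["operating_income"] + investee["operating_income"]
    return {
        "revenue": revenue,
        "operating_income": operating_income,
        "operating_margin": operating_income / revenue,
    }


# Hypothetical figures, in billions of dollars.
hyperscaler = {"revenue": 100.0, "operating_income": 40.0}
ai_lab = {"revenue": 5.0, "operating_income": -10.0}

combined = pro_forma_consolidation(hyperscaler, ai_lab, intercompany_revenue=2.0)
standalone_margin = hyperscaler["operating_income"] / hyperscaler["revenue"]
print(f"standalone margin: {standalone_margin:.1%}")
print(f"pro forma margin:  {combined['operating_margin']:.1%}")
```

Even with these modest assumptions, the pro forma operating margin falls well below the standalone figure, which is why companies are so motivated to stay on the right side of the consolidation line.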

This is an area where accounting, strategy, and market structure intersect, and where greater scrutiny is warranted.

Why These Risks Matter: The Gap Between GAAP and Reality

My point in raising these issues is not to declare a “bubble,” but to ensure that finance leaders understand the technical accounting complexities that may be influencing markets, valuations, and long-term decision making. These arrangements require a deeper level of sophistication across finance, technical accounting, and deal structuring. Having expertise in your corner, someone who can help you model these risks, evaluate multiple scenarios, and structure commitments ethically and within technical guidelines, will matter even more as these transactions continue to grow in scale and scrutiny.

It is important for everyone to understand the complexity of the relationships, the limitations of internal accountants and external auditors, and the reality that auditors provide reasonable assurance, not guarantees, over the financial statements. Their procedures are designed to assess material misstatement, not to validate the long-term economics or strategic wisdom of highly novel arrangements. Under PCAOB standards, auditors rely heavily on management’s estimates for fair value, commercial substance, and disclosure judgments, especially when observable inputs are limited. As a result, many of the issues outlined here (valuation sensitivity, reciprocal arrangements, and consolidation exposure) fall into areas where auditors depend substantially on management assumptions and representations.

Recommendations: How Finance Leaders Should Prepare

This places an even greater burden on companies to do their best to report the economics accurately, completely, and with clear, timely disclosures, rather than assuming auditors will surface or resolve every nuance. Companies have a duty to apply judgment rigorously, document it thoroughly, and disclose it transparently, especially when internal accounting teams may not have prior experience with the scale or structure of these AI-era transactions.

The strongest companies will proactively enhance their disclosures, provide clarity around the economic substance of these arrangements, and strengthen internal controls and governance over these judgments. There is also room for regulatory bodies, including the SEC, to consider whether additional disclosures or enhanced audit-procedure expectations are warranted for reciprocal commercial commitments, cross-investments, and related VIE exposure, given their growing influence on valuations and financial stability.

If you found this analysis helpful, I will be releasing a full white paper that goes much deeper into these topics, including scenario modeling, risk frameworks, recommended disclosure practices, and implications for forecasting and valuation. Until then, feel free to reach out if your team would benefit from a deeper dive or tailored analysis for your specific environment.